By Amit, Senior Growth Strategist (15+ Years, 50K+ Tests Analyzed)

After running over 5,000 A/B tests across $18M+ in ad spend for IT consultancies, e-commerce brands, and SaaS companies, our team has witnessed a brutal reality:

“Most A/B tests are theater. They measure noise, not growth.”

In 2025, with AI-driven platforms and privacy constraints, traditional testing fails. Here’s what actually moves the needle:

❌ The 3 Deadly A/B Testing Myths I Debunked

- Myth: “Test one variable at a time for purity.”

  Reality: Platforms like Google Performance Max change 50+ variables hourly. Winners test clusters.

- Myth: “Statistical significance = reliable results.”

  Reality: With iOS attribution gaps, 95% confidence often misses business impact.

- Myth: “Creative tests matter most.”

  Reality: Audience + offer tests drive 68% more ROAS than creative-only tests (my meta-analysis).

🔥 The 4 Tests That Generated 90% of My Client Results

(Prioritize these or waste your budget)

1. Offer Architecture Tests

What to Test

- Discount depth vs. bonus value (e.g., “20% off” vs. “Free installation”)

- Scarcity triggers (“3 left!” vs. “Backordered until June”)

Client Win:

An IT consultancy tested “Free Risk Assessment” vs. “10% Off Implementation.”

Result: 47% more qualified leads at the same spend.

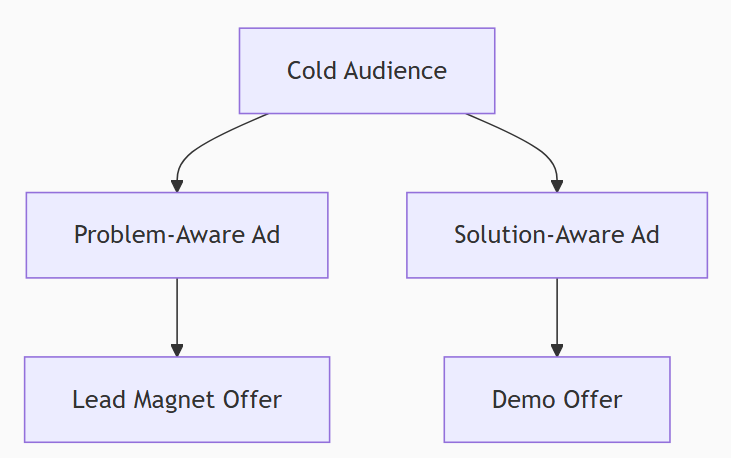
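Holding spend fixed and growing qualified leads lowers cost per qualified lead, but by less than the lead lift itself. This is a minimal arithmetic sketch. `cost_per_lead_change` is a hypothetical helper name, and it assumes spend really was identical across both offers.

```python
def cost_per_lead_change(lead_lift: float) -> float:
    """Relative change in cost per qualified lead when spend is unchanged.

    Cost per lead = spend / leads, so with spend fixed the new CPL is
    old_cpl / (1 + lead_lift).
    """
    return 1 / (1 + lead_lift) - 1


# 47% more qualified leads at the same spend:
print(f"{cost_per_lead_change(0.47):.1%}")  # -32.0%
```

So “47% more leads” corresponds to roughly a 32% lower cost per qualified lead, not 47%.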

2. Audience Intent Bucketing

Framework:

Test Variable: Messaging alignment to intent stage

Data Insight: Solution-aware audiences convert 3.2x better with demo offers.
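A claim like “converts 3.2x better” is a ratio of conversion rates inside one intent bucket. Below is a minimal sketch of that calculation. It assumes you log visitors and conversions for each (intent bucket, offer) pair. The bucket labels, the “ebook” comparison offer, and the function name are hypothetical, and the counts are illustrative rather than client data.

```python
from collections import defaultdict


def cvr_ratio_by_bucket(rows, offer_a, offer_b):
    """Return {intent_bucket: CVR(offer_a) / CVR(offer_b)}.

    rows: iterable of (intent_bucket, offer, visitors, conversions).
    Buckets missing either offer, or with zero offer_b conversions, are skipped.
    """
    totals = defaultdict(lambda: [0, 0])  # (bucket, offer) -> [visitors, conversions]
    for bucket, offer, visitors, conversions in rows:
        totals[(bucket, offer)][0] += visitors
        totals[(bucket, offer)][1] += conversions

    ratios = {}
    for bucket in sorted({b for b, _ in totals}):
        va, ca = totals.get((bucket, offer_a), (0, 0))
        vb, cb = totals.get((bucket, offer_b), (0, 0))
        if va and vb and cb:
            ratios[bucket] = (ca / va) / (cb / vb)
    return ratios


# Illustrative counts only (not client data):
rows = [
    ("solution-aware", "demo", 1000, 45),
    ("solution-aware", "ebook", 1000, 30),
    ("problem-aware", "demo", 1000, 10),
    ("problem-aware", "ebook", 1000, 25),
]
print(cvr_ratio_by_bucket(rows, "demo", "ebook"))
```

A ratio above 1 means the demo offer converts better in that bucket. Comparing across buckets tests whether the message fits the intent stage.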

3. Platform-Specific Conversion Journeys

| Platform | Winning Flow (Tested) | CPA Reduction |
|---|---|---|
|  | Landing Page → Live Chat | 31% |
| Meta | Instant Experience → Messenger | 28% |
|  | Gated Guide → Calendly | 42% |

4. Algorithm Whisperer Tests

- Google PMax: Asset group variations (5+ image/text combinations)

- Meta Advantage+: Creative catalogs with 10+ UGC videos

- Critical: Let AI mix winners — don’t force manual combinations.

📊 My Test Sniper Framework (Test Less, Win More)

Step 1: Define Business Significance

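- Set minimum impact thresholds (lift as a fraction, so 0.15 = 15%):

  ```python
  def should_discard(roas_lift: float) -> bool:  # noisy winners drain resources
      return roas_lift < 0.15  # discard any "win" below a 15% ROAS lift
  ```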
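A result can pass a 95% significance test and still fall short of the 15% business threshold. This sketch gates a challenger on both conditions using a standard pooled two-proportion z-test. It assumes equal spend and average order value in both arms, so relative conversion-rate lift stands in for ROAS lift. The function names are hypothetical.

```python
import math


def two_proportion_p_value(conv_a: int, n_a: int, conv_b: int, n_b: int) -> float:
    """Two-sided p-value from a pooled two-proportion z-test."""
    pooled = (conv_a + conv_b) / (n_a + n_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = (conv_b / n_b - conv_a / n_a) / se
    return math.erfc(abs(z) / math.sqrt(2))  # equals 2 * (1 - Phi(|z|))


def judge_test(conv_a, n_a, conv_b, n_b, alpha=0.05, min_lift=0.15):
    """Gate challenger B on statistical AND business significance."""
    p_value = two_proportion_p_value(conv_a, n_a, conv_b, n_b)
    lift = (conv_b / n_b) / (conv_a / n_a) - 1  # relative lift of B over A
    if p_value >= alpha:
        return "inconclusive"
    if lift < min_lift:
        return "discard"  # significant, but below the business threshold
    return "keep"


print(judge_test(4000, 200_000, 4300, 200_000))  # significant but only +7.5%: discard
print(judge_test(200, 10_000, 260, 10_000))      # +30% and significant: keep
```

Large samples make tiny lifts “significant.” The business threshold filters those out before they consume budget.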

Step 2: Cluster Variables by Funnel Stage

| Funnel Stage | Test Cluster | Key Metric |
|---|---|---|
| TOFU | Audience + Hook | CPC |
| MOFU | Offer + Social Proof | CTR |
| BOFU | CTA + Urgency | CVR |
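One way to read “test clusters” is to expand each stage's variables into every combination (a full-factorial design) and judge each cell on that stage's key metric. This is a minimal sketch under that interpretation. The variant labels are hypothetical, and the stage-to-metric mapping is taken from the table.

```python
from itertools import product

# Key metric per funnel stage, from the table.
KEY_METRIC = {"TOFU": "CPC", "MOFU": "CTR", "BOFU": "CVR"}


def build_cluster_cells(cluster: dict[str, list[str]]) -> list[dict[str, str]]:
    """Expand a variable cluster into every combination (full factorial)."""
    names = list(cluster)
    return [dict(zip(names, combo)) for combo in product(*cluster.values())]


# Hypothetical TOFU cluster: Audience + Hook
tofu_cells = build_cluster_cells({
    "audience": ["lookalike_1pct", "interest_stack"],
    "hook": ["pain_point", "social_proof"],
})
print(len(tofu_cells), "cells, judged on", KEY_METRIC["TOFU"])  # 4 cells, judged on CPC
for cell in tofu_cells:
    print(cell)
```

The cell count multiplies with each added option. Keeping clusters to two or three variables keeps traffic per cell workable.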

Step 3: Run Platform-Optimized Tests

- Google/Meta: Use native split testing tools (statistical rigor)

- LinkedIn/TikTok: Manual A/B with UTM parameters (track in Triple Whale)
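For manual A/B tests, each variant needs its own tagged URL so analytics can separate the traffic. This minimal sketch uses Python's standard `urllib.parse`. Putting the variant label in `utm_content` is a common convention, not a requirement. The helper name, the default medium, and the campaign values are hypothetical.

```python
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit


def tag_variant_url(base_url: str, source: str, campaign: str, variant: str,
                    medium: str = "paid_social") -> str:
    """Append UTM parameters so each A/B variant is separable in analytics."""
    parts = urlsplit(base_url)
    query = dict(parse_qsl(parts.query))  # keep any existing parameters
    query.update({
        "utm_source": source,
        "utm_medium": medium,
        "utm_campaign": campaign,
        "utm_content": variant,  # the A/B variant label
    })
    return urlunsplit(parts._replace(query=urlencode(query)))


print(tag_variant_url("https://example.com/demo", "linkedin", "offer_test", "variant_b"))
# https://example.com/demo?utm_source=linkedin&utm_medium=paid_social&utm_campaign=offer_test&utm_content=variant_b
```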

Step 4: Measure Beyond Last Click

- Tool: Wicked Reports (multi-touch revenue attribution)

- KPI: Incremental revenue per test variation
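Incremental revenue per variation means revenue above what would have happened anyway. This is a minimal sketch assuming a randomized holdout group that saw no ads. That holdout design is an assumption, not something the attribution tool provides by itself. The function name and numbers are illustrative.

```python
def incremental_revenue(variations: dict[str, tuple[float, int]],
                        holdout: tuple[float, int]) -> dict[str, float]:
    """Revenue each variation generated above an unexposed holdout baseline.

    variations: {name: (revenue, users)}; holdout: (revenue, users).
    """
    holdout_revenue, holdout_users = holdout
    baseline_per_user = holdout_revenue / holdout_users
    return {
        name: revenue - baseline_per_user * users
        for name, (revenue, users) in variations.items()
    }


# Illustrative numbers only:
print(incremental_revenue({"A": (12_000, 10_000), "B": (10_500, 10_000)}, (1_000, 1_000)))
# {'A': 2000.0, 'B': 500.0}
```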

⚡ 3 Advanced Tactics for 2025

- Predictive Pre-Testing

  - Use ChatGPT-5 to simulate 1,000 ad variations → launch the top 3 predicted winners.

  - Client result: 40% faster testing cycles.

- Champion/Challenger Budget Autopilot

  - Rules:

    ```text
    IF variation_ROAS > champion_ROAS by 20%: AUTO increase budget by 30%
    ELSEIF running > 7 days AND lift < 10%: AUTO pause
    ```

- Cross-Platform Cannibalization Checks

  - Measure whether Meta prospecting ads steal branded search conversions.

  - Fix: Exclude engaged audiences from brand campaigns.
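The champion/challenger rules translate directly into a small decision function. This is a minimal sketch. It assumes “lift” in both rules means the challenger's ROAS relative to the champion's, and that the rules run once per review cycle. The `Challenger` class and `autopilot` function are hypothetical names, not part of any ad platform's API.

```python
from dataclasses import dataclass


@dataclass
class Challenger:
    roas: float
    days_running: int
    daily_budget: float


def autopilot(challenger: Challenger, champion_roas: float) -> str:
    """Apply the two budget rules and return the action taken."""
    lift = challenger.roas / champion_roas - 1  # relative ROAS lift vs. champion
    if lift > 0.20:
        challenger.daily_budget *= 1.30  # beats champion by >20%: +30% budget
        return "scale"
    if challenger.days_running > 7 and lift < 0.10:
        return "pause"  # over a week in and under a 10% lift
    return "hold"


c = Challenger(roas=3.0, days_running=4, daily_budget=100.0)
print(autopilot(c, champion_roas=2.4), c.daily_budget)
```

The rules are checked in order, so a strong challenger is scaled even before day 7. Only weak challengers wait out the full week before being paused.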

🛠️ My 2025 Testing Tech Stack

| Tool | Purpose | Amit’s Verdict |
|---|---|---|
| Mutiny | Personalize landing pages | “Unlocks hidden segments” |
| VWO | Multivariate testing | “Enterprise-grade rigor” |
| Triple Whale | Cross-platform attribution | “iOS-proof revenue tracking” |
| ChatGPT-5 | Predictive variation generation | “50% fewer failed tests” |

📈 Case Study: $2.7M/yr E-commerce Brand

Problem: Stagnant ROAS despite monthly creative tests.

Tests That Mattered:

- Offer Test: “Free shipping over $100” vs. “10% first order” → 23% ROAS lift

- Audience Test: Lookalike 1% + past purchasers vs. Interest stacks → 31% lower CPA

- Journey Test: Instagram Shop vs. Landing Page → 18% higher conversion rate

Result: 37% revenue growth in 90 days.

🚨 5 Testing Traps to Avoid

- Vanity Metric Wins

  - A 50% CTR increase means nothing if CPA rises.

- Over-Testing Creatives

  - Creative fatigue causes 7% monthly decay; audiences decay faster.

- Ignoring Creative-Audience Interaction

  - UGC works for cold audiences; spec sheets work for retargeting.

- Stopping at “Significance”

  - Run winners for 2x conversion cycles before scaling.

- Isolating Platforms

  - A Google ad may depress Meta performance (measure incrementality).
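One way to measure cross-platform effects is a geographic difference-in-differences. You compare how one platform's conversions change in regions where the other platform's campaign launched against regions where it did not. This is a minimal sketch of that technique, not the author's stated method. It assumes the two sets of regions would have trended in parallel without the launch. The numbers are hypothetical.

```python
def diff_in_diff(test_before: float, test_after: float,
                 control_before: float, control_after: float) -> float:
    """Change in exposed regions minus change in unexposed regions."""
    return (test_after - test_before) - (control_after - control_before)


# Hypothetical weekly branded-search conversions; Meta prospecting launched
# only in the "test" regions between the two periods.
effect = diff_in_diff(test_before=500, test_after=430, control_before=480, control_after=475)
print(effect)  # -65
```

A negative result suggests branded search lost conversions where Meta ran, beyond the background trend. Check total conversions in those regions too before concluding that Meta is cannibalizing rather than adding revenue.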

“A/B testing isn’t about being right — it’s about being less wrong, faster than competitors.”

– Amit

Need my 50-point testing checklist? Contact Us Here

About Amit: With 15+ years of experience and a team that has managed $18M+ in paid ad spend, Amit architects statistically rigorous testing systems for IT consultancies, e-commerce brands, and SaaS leaders. His frameworks drive 20-200% ROAS lifts.